𝗔 𝗗𝗲𝗲𝗽 𝗗𝗶𝘃𝗲 𝗶𝗻𝘁𝗼 𝗤𝘂𝗲𝗿𝘆 𝗘𝘅𝗲𝗰𝘂𝘁𝗶𝗼𝗻 𝗘𝗻𝗴𝗶𝗻𝗲 𝗼𝗳 𝗦𝗽𝗮𝗿𝗸 𝗦𝗤𝗟

🚩𝗤𝘂𝗲𝘀𝘁𝗶𝗼𝗻

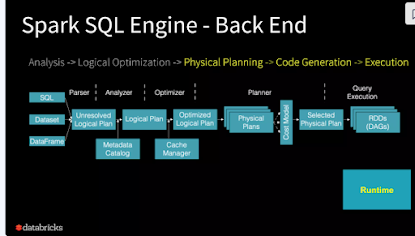


🚀𝗨𝗻𝗿𝗲𝘀𝗼𝗹𝘃𝗲𝗱 𝗟𝗼𝗴𝗶𝗰𝗮𝗹 𝗣𝗹𝗮𝗻

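👉🏻Syntactic check: the parser verifies that the query is valid SQL.

👉🏻Semantic verification (the referenced tables and columns must be shown to exist) has not happened yet, which is why the plan is called "unresolved".

👉🏻The result of parsing is a plan tree whose table and column names are still unresolved.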

🚀𝗦𝗰𝗵𝗲𝗺𝗮/𝗠𝗲𝘁𝗮𝗱𝗮𝘁𝗮 𝗖𝗮𝘁𝗮𝗹𝗼𝗴𝘂𝗲

👉🏻Data types and schema details are looked up in the catalog to resolve the table and column names in the plan.
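To make the catalog lookup concrete, here is a minimal sketch in plain Python. It is not Spark code: the `CATALOG` dictionary, the two attribute classes, and the `resolve` function are all hypothetical names invented for illustration. It only shows the idea that an unresolved column name becomes a resolved one once its data type is found in the catalog.

```python
from dataclasses import dataclass

# Hypothetical toy catalog: table name -> {column name: data type}.
CATALOG = {"people": {"name": "string", "age": "int"}}


@dataclass(frozen=True)
class UnresolvedAttribute:
    name: str  # column name as written in the query, not yet verified


@dataclass(frozen=True)
class ResolvedAttribute:
    name: str
    data_type: str  # filled in from the catalog


def resolve(table, attrs):
    """Look up each unresolved column in the catalog, or raise if it is unknown."""
    if table not in CATALOG:
        raise LookupError(f"table not found: {table}")
    schema = CATALOG[table]
    resolved = []
    for attr in attrs:
        if attr.name not in schema:
            raise LookupError(f"column not found: {attr.name}")
        resolved.append(ResolvedAttribute(attr.name, schema[attr.name]))
    return resolved


# SELECT name, age FROM people: both columns resolve with their types.
resolved = resolve("people", [UnresolvedAttribute("name"), UnresolvedAttribute("age")])
print(resolved)
```

A query that mentions a column missing from the catalog fails at this stage, before any data is read.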

🚀𝗟𝗼𝗴𝗶𝗰𝗮𝗹 𝗣𝗹𝗮𝗻

👉🏻The Catalyst optimizer is written in Scala and represents every plan as a tree.

👉🏻Each tree is made of nodes, and each node has zero or more child nodes. Rules are functions that transform one tree into another.

👉🏻Rule-based optimization is applied to the nodes of the tree.

👉🏻Applying rules can produce a number of equivalent logical plans.
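The tree-and-rule idea can be sketched in a few lines of plain Python. This is a toy model, not Catalyst itself: the node classes `Literal`, `Attribute`, and `Add`, and the helpers `transform_up` and `fold_constants`, are hypothetical names chosen for this example. The rule shown is constant folding, which replaces an addition of two literals with a single literal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Literal:
    value: int


@dataclass(frozen=True)
class Attribute:
    name: str


@dataclass(frozen=True)
class Add:
    left: object
    right: object


def transform_up(node, rule):
    """Apply `rule` to every node, children first, and return the new tree."""
    if isinstance(node, Add):
        node = Add(transform_up(node.left, rule), transform_up(node.right, rule))
    return rule(node)


def fold_constants(node):
    """Rule: replace Add(Literal, Literal) with one Literal holding the sum."""
    if (
        isinstance(node, Add)
        and isinstance(node.left, Literal)
        and isinstance(node.right, Literal)
    ):
        return Literal(node.left.value + node.right.value)
    return node


# x + (1 + 2) becomes x + 3
tree = Add(Attribute("x"), Add(Literal(1), Literal(2)))
optimized = transform_up(tree, fold_constants)
print(optimized)
```

The tree is immutable here, so a rule never edits a node in place. It returns a new node, and the unchanged parts of the tree are reused.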

🚀𝗢𝗽𝘁𝗶𝗺𝗶𝘇𝗲𝗱 𝗟𝗼𝗴𝗶𝗰𝗮𝗹 𝗣𝗹𝗮𝗻

👉🏻Related rules are grouped into batches, and a batch can be applied repeatedly until the plan stops changing.

👉🏻Predicate pushdown

👉🏻Projection pushdown

👉🏻Reordering and combining of filters

👉🏻Conversion of some decimal operations to faster integer operations

👉🏻Replacement of some regular expressions with plain Java string methods

👉🏻If-else clause simplification
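Two of the optimizations above, pushing a predicate down and combining filters, can be sketched together as a small batch of rules that runs until the plan stops changing. This is a toy model in plain Python with hypothetical names (`Scan`, `Filter`, `Project`, `run_batch`); conditions are plain strings, and the sketch assumes a projection only selects columns, so a filter can always be moved beneath it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scan:
    table: str


@dataclass(frozen=True)
class Filter:
    condition: str
    child: object


@dataclass(frozen=True)
class Project:
    columns: tuple
    child: object


def push_filter_below_project(node):
    """Rule: Filter(Project(child)) -> Project(Filter(child))."""
    if isinstance(node, Filter) and isinstance(node.child, Project):
        project = node.child
        return Project(project.columns, Filter(node.condition, project.child))
    return node


def combine_filters(node):
    """Rule: two stacked filters become one filter with an AND condition."""
    if isinstance(node, Filter) and isinstance(node.child, Filter):
        inner = node.child
        return Filter(f"({inner.condition}) AND ({node.condition})", inner.child)
    return node


def transform_down(node, rule):
    """Apply `rule` to a node first, then to its (possibly new) child."""
    node = rule(node)
    if isinstance(node, Filter):
        return Filter(node.condition, transform_down(node.child, rule))
    if isinstance(node, Project):
        return Project(node.columns, transform_down(node.child, rule))
    return node


def run_batch(plan, rules, max_iterations=100):
    """Apply every rule in the batch repeatedly until a fixed point is reached."""
    for _ in range(max_iterations):
        new_plan = plan
        for rule in rules:
            new_plan = transform_down(new_plan, rule)
        if new_plan == plan:
            return plan
        plan = new_plan
    return plan


plan = Filter(
    "age > 21",
    Project(("name", "age"), Filter("country = 'NL'", Scan("people"))),
)
optimized = run_batch(plan, [push_filter_below_project, combine_filters])
print(optimized)
```

After the batch runs, both conditions sit in a single filter directly above the scan, which is the position from which a data source could apply them.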

🚀𝗣𝗵𝘆𝘀𝗶𝗰𝗮𝗹 𝗣𝗹𝗮𝗻𝘀

👉🏻The optimizer constructs one or more physical plans from the optimized logical plan.

👉🏻A physical plan defines how data will be computed.

👉🏻These plans are also optimized.

👉🏻The optimization can merge several filters into one and push predicates down to the data source, so that unneeded data is eliminated at the source.

🚀𝗦𝗲𝗹𝗲𝗰𝘁𝗲𝗱 𝗣𝗵𝘆𝘀𝗶𝗰𝗮𝗹 𝗣𝗹𝗮𝗻𝘀

👉🏻The optimizer determines which physical plan has the lowest cost of execution and chooses that plan for computation.

👉🏻Cost is a 𝗺𝗲𝘁𝗿𝗶𝗰 𝘂𝘀𝗲𝗱 to 𝗲𝘀𝘁𝗶𝗺𝗮𝘁𝗲 how expensive each candidate plan would be to run, so that the plans can be compared.
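Choosing the cheapest plan can be sketched as a simple minimum over estimated costs. Everything below is hypothetical: the `PhysicalPlan` class, the weights in `estimate_cost`, and the row and byte counts are made-up illustration values, not Spark's actual cost model or real measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PhysicalPlan:
    name: str
    rows_processed: int   # assumed estimate, not a measurement
    bytes_shuffled: int   # assumed estimate, not a measurement


def estimate_cost(plan, row_weight=1.0, byte_weight=0.001):
    """Toy cost formula: a weighted sum of rows processed and bytes shuffled."""
    return row_weight * plan.rows_processed + byte_weight * plan.bytes_shuffled


def select_plan(candidates):
    """Pick the candidate with the lowest estimated cost."""
    return min(candidates, key=estimate_cost)


# Two candidate strategies for the same join, with invented statistics.
candidates = [
    PhysicalPlan("sort_merge_join", rows_processed=1_000_000, bytes_shuffled=800_000_000),
    PhysicalPlan("broadcast_hash_join", rows_processed=1_000_000, bytes_shuffled=5_000_000),
]
best = select_plan(candidates)
print(best.name)
```

With these invented numbers the plan that shuffles less data wins. A different set of statistics could just as easily favor the other plan, which is why the estimates matter.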

🚀𝗘𝘅𝗲𝗰𝘂𝘁𝗶𝗼𝗻 𝗼𝗻 𝗡𝗮𝘁𝗶𝘃𝗲 𝗥𝗗𝗗𝘀

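👉🏻Catalyst generates Java bytecode for the selected physical plan, which then runs on RDDs. In the original Catalyst design, this generation relies on a Scala feature called "𝗾𝘂𝗮𝘀𝗶𝗾𝘂𝗼𝘁𝗲𝘀".

👉🏻This step is itself an optimization: running generated code is faster than interpreting the expression tree for every row.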

👉🏻Catalyst relies on a special feature of the Scala language: quasiquotes.

👉🏻Quasiquotes make code generation easier.

👉🏻𝗤𝘂𝗮𝘀𝗶𝗾𝘂𝗼𝘁𝗲𝘀 allow the programmatic construction of abstract syntax trees (ASTs) in Scala, which can then be fed to the Scala compiler at runtime to generate bytecode.
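Python has no quasiquotes, but its standard `ast` module gives a rough analogy for the same idea: build an AST in code, hand it to the compiler at runtime, and get back something executable. The sketch below assumes Python 3.9 or later, and `build_predicate` is a hypothetical helper written for this example. It is not how Spark generates code.

```python
import ast


def build_predicate(column, threshold):
    """Build and compile `lambda row: row[column] > threshold` from AST nodes."""
    # AST for the comparison: row[<column>] > <threshold>
    body = ast.Compare(
        left=ast.Subscript(
            value=ast.Name(id="row", ctx=ast.Load()),
            slice=ast.Constant(value=column),
            ctx=ast.Load(),
        ),
        ops=[ast.Gt()],
        comparators=[ast.Constant(value=threshold)],
    )
    # AST for the surrounding function: lambda row: <body>
    func = ast.Lambda(
        args=ast.arguments(
            posonlyargs=[],
            args=[ast.arg(arg="row")],
            kwonlyargs=[],
            kw_defaults=[],
            defaults=[],
        ),
        body=body,
    )
    tree = ast.Expression(body=func)
    ast.fix_missing_locations(tree)  # the compiler needs line/column info
    code = compile(tree, filename="<generated>", mode="eval")
    return eval(code)  # evaluating the lambda expression yields a function


is_adult = build_predicate("age", 21)
print(is_adult({"name": "Ann", "age": 30}))
print(is_adult({"name": "Bob", "age": 18}))
```

The column name and threshold are baked into the compiled function as constants, so evaluating the predicate per row no longer needs to walk an expression tree.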
